In 2022, I (Anna) wrote a proposal for a user-owned foundation model trained on private data rather than on data publicly scraped from the internet. I argued that while it is possible to train foundation models on public data (e.g. Wikipedia, 4chan), taking them to the next level requires high-quality private data that exists only on walled-off platforms where you need permission or a login to get access (such as Twitter, personal messages, company information).

This prediction is beginning to come true. Companies like Reddit and Twitter have realized the value of their platform data, so they have locked down their developer APIs (1, 2) to prevent other companies from freely using their text data to train foundation models.

This is a dramatic change from two years ago. Venture capitalist Sam Lessin summed up the shift: “[The platforms] were just throwing this trash out back, nobody was looking after it, and then all of a sudden you’re like, oh, damn, that trash is gold, right? We’ve got a lot of it. We have to lock the bin.” For example, GPT-3 was trained on WebText2, which aggregates the text behind all links in Reddit submissions that received at least 3 upvotes (3, 4). This is no longer possible under Reddit’s new API.

The internet is becoming less open, and walled-off platforms are building higher walls to protect their valuable training data.

Although developers can no longer access this data at scale, individuals can still access and export their own data across platforms thanks to data privacy regulations (5, 6). The fact that platforms have locked down their developer APIs while individual users can still access their own data presents an opportunity: could 100 million users export their platform data to create the world’s largest data treasury? This data treasury would aggregate all the user data collected by big tech and other companies, which are often reluctant to share it. It would be the largest and most comprehensive training dataset to date, 100 times larger than the datasets used to train today’s leading foundation models.1

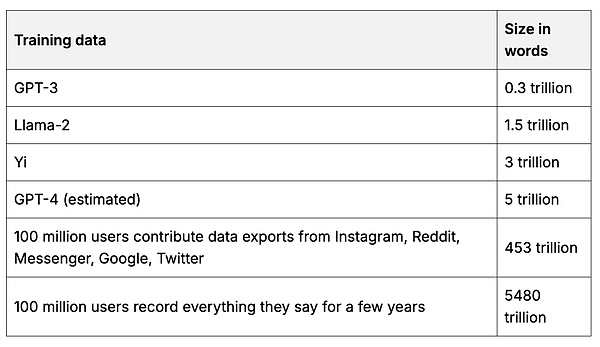

Table 1. Data

A rough comparison of foundation model training datasets with a sample user dataset. Sources and calculations.

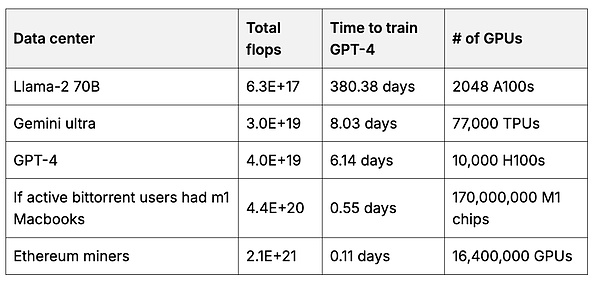

Users could then create a user-owned foundation model trained on more data than any single company can aggregate. Training a foundation model requires a lot of GPU compute. But each user can use their own hardware to help train a small part of the model, and these parts can then be merged to create a larger and more powerful model (7, 8, 9).2 With the right incentives, users can pool a large amount of compute. For example, Ethereum miners collectively had 50 times more compute than was used to train leading foundation models.
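To make the "train small parts, then merge" idea concrete, here is a minimal sketch of one well-known merging approach: averaging parameters, weighted by how much data each user trained on (the idea behind federated averaging). This is an illustration under assumptions, not necessarily the method the cited work uses. The function name `merge_updates` and the toy parameter layout are hypothetical.

```python
from typing import Dict, List, Tuple

# A toy "model": named parameter vectors. Real models hold large tensors.
Params = Dict[str, List[float]]


def merge_updates(updates: List[Tuple[Params, int]]) -> Params:
    """Merge locally trained parameters into one model.

    Each update is (params, num_examples). Parameters are averaged,
    weighted by the number of examples each user trained on.
    Assumes every update has the same parameter names and shapes.
    """
    if not updates:
        raise ValueError("need at least one update")
    total_examples = sum(n for _, n in updates)
    if total_examples <= 0:
        raise ValueError("total number of examples must be positive")

    first_params, _ = updates[0]
    merged: Params = {name: [0.0] * len(vals) for name, vals in first_params.items()}

    for params, num_examples in updates:
        weight = num_examples / total_examples
        for name, vals in params.items():
            for i, value in enumerate(vals):
                merged[name][i] += weight * value
    return merged


# Two users train the same tiny model on their own hardware.
alice = ({"w": [1.0, 2.0], "b": [0.0]}, 100)  # trained on 100 examples
bob = ({"w": [3.0, 4.0], "b": [1.0]}, 300)    # trained on 300 examples
merged = merge_updates([alice, bob])
print(merged)
```

Bob trained on three times as much data as Alice, so his parameters count three times as much in the merged model. Real systems also have to deal with unreliable participants, communication cost, and privacy, all of which this sketch ignores.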

Table 2. Compute

Total floating-point operations per second (FLOPS, roughly the combined “thinking” speed) of the data centers used to train foundation models, compared with the GPUs of Ethereum miners.3 Sources and calculations.

Users who contribute to the model will collectively own and govern it. They can get paid when the model is used, and can even be paid in proportion to how much their data improves the model. The collective can set the rules of use, including who can access the model and which controls should be put in place. Perhaps users in each country will create their own models that represent their ideology and culture. Or maybe countries are not the right dividing line, and we will see a world where each network state has its own foundation model based on its members’ data.
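As a rough illustration of proportional payment, the sketch below splits a pool of model revenue pro rata by a per-user contribution score. Measuring how much someone's data actually improves a model is the hard part and is not shown here. The scores, the function name `split_revenue`, and the rule for leftover cents are all assumptions made for the example.

```python
from typing import Dict


def split_revenue(revenue_cents: int, scores: Dict[str, float]) -> Dict[str, int]:
    """Split revenue in proportion to each user's contribution score.

    Amounts are in whole cents. Payouts are rounded down, and any
    leftover cents go to the highest-scoring contributor (an arbitrary
    tie-breaking rule chosen for this sketch).
    """
    if revenue_cents < 0:
        raise ValueError("revenue must be non-negative")
    if not scores or any(s < 0 for s in scores.values()):
        raise ValueError("scores must be non-empty and non-negative")
    total_score = sum(scores.values())
    if total_score <= 0:
        raise ValueError("at least one score must be positive")

    payouts = {user: int(revenue_cents * s / total_score) for user, s in scores.items()}
    leftover = revenue_cents - sum(payouts.values())
    top_contributor = max(scores, key=scores.get)
    payouts[top_contributor] += leftover
    return payouts


# Hypothetical scores: how much each user's data improved the model.
payouts = split_revenue(10_000, {"ana": 5.0, "ben": 3.0, "chi": 2.0})
print(payouts)
```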

I encourage you to take the time to think about what part of a foundation model you want to own and what training data you can contribute from the platforms you use. You may have more data than you realize: your research papers, unpublished artwork, your Google Docs, your dating profile, your medical records, your Slack messages. One way to bring this data together is through a personal server, which lets you easily use your private data with a local LLM. In the future, your personal server could also train part of a user-owned foundation model.

Foundation models tend toward monopoly because they require substantial up-front investment in data and compute. It is tempting to take the easy option: make do with open source models that lag a generation behind, the leftovers of the big AI companies. But we should not be satisfied with being a generation behind and eating only leftovers! As users, we should create our own best-in-class model. We have the data and the compute to do it.

As AI becomes increasingly capable of doing economically valuable work, a huge economic shift is underway. Big tech companies have trained AI models on your public work (writing, artwork, photos, and other data) and have started making billions of dollars a year (1). They are now going after data that is not available on the public internet, buying your private data from companies like Reddit so they can grow their AI revenue to trillions of dollars a year (2, 3).

Shouldn’t you own a piece of the AI models that your data helps create?

This is where data DAOs come in. A data DAO is a decentralized entity that lets users pool and govern their data, and rewards contributors with dataset-specific tokens that represent ownership of that dataset. It’s kind of like a data union. These datasets can replicate or even surpass the datasets that big tech companies sell for hundreds of millions of dollars (4). The DAO has full control over the dataset and can choose to rent out or sell anonymized copies. For example, Reddit data could even be used to seed new, user-owned platforms, where your friends, your past posts, and your other data are ready to use on the new platform.

If you are interested in the technical details, a data DAO has two main components: 1) on-chain governance, where tokens are earned through data contributions; and 2) a secure server, encrypted with a public-private key pair, where the community-owned dataset resides. To contribute, you first validate your data to prove ownership and estimate its value. You then encrypt the data in the browser with the server’s public key and store the encrypted data in the cloud. The data is decrypted only when the DAO approves a proposal to grant access. For example, the DAO could allow AI companies to rent the data to train models. You can read more about the Vana network architecture here, which is designed to enable collective ownership of datasets and models.
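The flow described above can be sketched as a small in-memory model. This is a toy illustration, not the Vana implementation: the class and method names, the rule of one token per unit of estimated value, and the rule that a proposal needs more than half of all tokens are assumptions. Encryption is out of scope here, so contributed data is treated as an opaque blob that was already encrypted in the browser.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Proposal:
    requester: str                              # e.g. an AI company asking to rent the data
    voters: Set[str] = field(default_factory=set)


@dataclass
class DataDAO:
    approval_threshold: float = 0.5             # assumed: share of all tokens needed to approve
    balances: Dict[str, float] = field(default_factory=dict)
    encrypted_blobs: List[bytes] = field(default_factory=list)
    proposals: List[Proposal] = field(default_factory=list)

    def contribute(self, user: str, encrypted_blob: bytes, estimated_value: float) -> None:
        """Store already-encrypted data and mint tokens for its estimated value."""
        if estimated_value <= 0:
            raise ValueError("estimated value must be positive")
        self.encrypted_blobs.append(encrypted_blob)
        self.balances[user] = self.balances.get(user, 0.0) + estimated_value

    def propose_access(self, requester: str) -> int:
        """Open a proposal to grant access; returns its id."""
        self.proposals.append(Proposal(requester))
        return len(self.proposals) - 1

    def vote(self, proposal_id: int, user: str) -> None:
        """Record a vote in favor. Only token holders may vote."""
        if self.balances.get(user, 0.0) <= 0:
            raise PermissionError("only token holders can vote")
        self.proposals[proposal_id].voters.add(user)

    def is_approved(self, proposal_id: int) -> bool:
        """Token-weighted tally: approved when voters hold enough of all tokens."""
        total = sum(self.balances.values())
        in_favor = sum(self.balances[u] for u in self.proposals[proposal_id].voters)
        return total > 0 and in_favor > self.approval_threshold * total

    def release(self, proposal_id: int) -> List[bytes]:
        """Hand over the blobs for decryption only after approval."""
        if not self.is_approved(proposal_id):
            raise PermissionError("proposal has not been approved by the DAO")
        return list(self.encrypted_blobs)


dao = DataDAO()
dao.contribute("ana", b"<ciphertext from ana>", estimated_value=60)
dao.contribute("ben", b"<ciphertext from ben>", estimated_value=40)

pid = dao.propose_access("ai-lab")
dao.vote(pid, "ben")
print(dao.is_approved(pid))   # ben alone holds 40 of 100 tokens
dao.vote(pid, "ana")
print(dao.is_approved(pid))   # ana and ben together hold all tokens
```

In a real deployment the tally would live on-chain and the decryption key would stay inside the secure server. The sketch only shows how token-weighted approval gates access to the data.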

Data DAOs do not just benefit users. They also advance AI by making it possible to build AI the way open source software is built, to the benefit of everyone who contributes. Open source AI has struggled to find a viable business model: paying for GPUs, data, and researchers is very expensive. And once a model is trained, if it is open source, those costs cannot be recouped. The technical architecture of a data DAO can also be applied to a model DAO, where users and developers contribute data, compute, and research in exchange for ownership of the model.

The default today is to let big tech companies take our data and use it to train AI models that do our work. They then profit from those models while we are replaced by models trained on our own data. This is a very bad deal for society but a great one for big tech. The only way to prevent it is collective action. Data is currency, and collective data is power. I encourage you to get involved: the world’s first data DAO, focused on Reddit data, is live today on the Vana network. By breaking down the data moats controlled by a privileged few, data DAOs open a path to an internet truly owned by its users.