Author: Vitalik; Compilation: Deng Tong, Bitchain Vision

Special thanks to Dankrad Feist, Caspar Schwarz-Schilling and Francesco for their rapid feedback and review.

I am writing this on the last day of an Ethereum developer interop in Kenya, where we made a great deal of progress implementing and ironing out the technical details of important upcoming Ethereum improvements, including the Verkle tree transition and decentralized approaches to storing history in the context of EIP-4444. From my own perspective, the pace of Ethereum development, and our capacity to ship large and important features that meaningfully improve the experience of node operators and (L1 and L2) users, keeps increasing.

Ethereum client teams working together to ship the Pectra devnet.

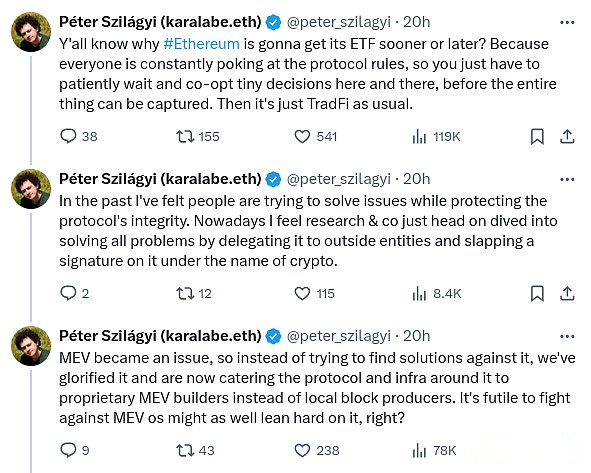

Given this greater technical capacity, an important question to ask is: are we building toward the right goals? A recent series of unhappy tweets from long-time Geth core developer Peter Szilagyi is one prompt for thinking about this:

These are valid concerns. Many people in the Ethereum community share them, and I have had them myself on many occasions. However, I do not think the situation is anywhere near as hopeless as Peter's tweets imply. On the contrary, many of the concerns are already being addressed by protocol features that are in progress, and many others can be addressed by very realistic adjustments to the current roadmap.

To see what this means in practice, let us go through the three examples Peter provided one by one. These concerns are widely shared among community members, and it is important to address them.

MEV and builder dependence

In the past, Ethereum blocks were created by miners, who used a relatively simple algorithm to create them. Users send transactions to a public P2P network, usually called the "mempool" (or "txpool"). Miners listen to the mempool and accept transactions that are valid and pay fees. They include the transactions they can, and if there is not enough space, they prioritize by highest fee first.
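
The simple algorithm described above can be sketched roughly as follows. This is a minimal illustration, not the logic of any real client: the `Tx` fields, the `build_block` helper and the example numbers are all assumptions made for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    gas: int            # gas the transaction consumes (hypothetical field)
    fee_per_gas: int    # fee offered per unit of gas (hypothetical field)
    valid: bool = True  # stands in for signature/nonce/balance checks


def build_block(mempool, gas_limit):
    """Greedy highest-fee-first selection under a block gas limit."""
    block, gas_used = [], 0
    for tx in sorted(mempool, key=lambda t: t.fee_per_gas, reverse=True):
        if not tx.valid:
            continue  # invalid transactions are never included
        if gas_used + tx.gas > gas_limit:
            continue  # does not fit; a cheaper, smaller one still might
        block.append(tx)
        gas_used += tx.gas
    return block


mempool = [
    Tx("alice", gas=21_000, fee_per_gas=30),
    Tx("bob", gas=50_000, fee_per_gas=45),
    Tx("carol", gas=21_000, fee_per_gas=10),
    Tx("dave", gas=21_000, fee_per_gas=99, valid=False),
]
block = build_block(mempool, gas_limit=75_000)
print([tx.sender for tx in block])  # ['bob', 'alice']
```

Nothing in this selection rule needs knowledge of what the transactions do, which is why default software was enough to compete.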

This was a very simple system, and it was friendly to decentralization: as a miner, you could just run the default software and earn the same level of fee revenue per block as a highly professional mining farm. Around 2020, however, people started exploiting what was called miner extractable value (MEV): revenue that can only be captured by executing complex strategies that are aware of activity happening inside various DeFi protocols.

For example, consider decentralized exchanges like Uniswap. Suppose that at time T, the USD/ETH exchange rate is $3,000 both on centralized exchanges and on Uniswap. At time T+11, the rate on centralized exchanges rises to $3,005, but Ethereum has not yet produced its next block. At time T+12, it does. Whoever creates that block can make their first transactions a series of Uniswap buys, buying up all the ETH available on Uniswap at prices from $3,000 to $3,004. This extra revenue is called MEV. Applications other than DEXes have their own analogues of this problem. The Flash Boys 2.0 paper, published in 2019, covers this in detail.
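
To make the arithmetic concrete, here is a rough sketch of that trade against a constant-product pool (reserves satisfying `eth * usd = k`). The pool size, the absence of trading fees and the `arbitrage_to_target` helper are all assumptions for illustration; real pools charge fees and may use other pricing curves.

```python
import math


def arbitrage_to_target(eth_reserve, usd_reserve, target_price, cex_price):
    """Buy ETH from a constant-product pool until its marginal price
    (usd_reserve / eth_reserve) reaches target_price.

    Returns (eth_bought, usd_paid, profit_usd), where the profit assumes the
    ETH is resold on a centralized exchange at cex_price. Fees are ignored.
    """
    k = eth_reserve * usd_reserve          # invariant: eth * usd = k
    new_eth = math.sqrt(k / target_price)  # reserves where usd/eth == target
    new_usd = math.sqrt(k * target_price)
    eth_bought = eth_reserve - new_eth
    usd_paid = new_usd - usd_reserve
    profit_usd = eth_bought * cex_price - usd_paid
    return eth_bought, usd_paid, profit_usd


# Hypothetical pool priced at $3,000: 1,000 ETH against 3,000,000 USD.
eth_bought, usd_paid, profit = arbitrage_to_target(1_000, 3_000_000, 3_004, 3_005)
avg_price = usd_paid / eth_bought
print(f"bought {eth_bought:.4f} ETH at an average of ${avg_price:.2f}, "
      f"profit ${profit:.2f}")
```

The average price paid falls between $3,000 and $3,004, below the $3,005 available elsewhere, so the trade is profitable for whoever gets to place it first in the block.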

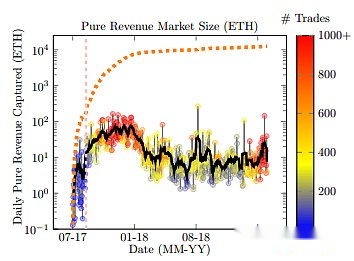

A chart from the Flash Boys 2.0 paper showing the amount of revenue that can be captured using the kinds of approaches described above.

The problem is that this breaks the argument for why mining (or, since 2022, block proposing) can be "fair": large actors who are better at optimizing these extraction strategies can now earn a better return per block.

Since then, there has been a debate between two strategies: MEV minimization and MEV quarantining. MEV minimization comes in two forms: (i) actively developing MEV-free alternatives to Uniswap (such as Cowswap), and (ii) building in-protocol techniques, such as encrypted mempools, that reduce the information available to block producers and thereby the revenue they can capture. In particular, encrypted mempools prevent strategies such as sandwich attacks, which place transactions immediately before and after a user's trade in order to exploit it financially ("front-running").

MEV quarantining works by accepting that MEV exists, but trying to limit its impact on staking centralization by splitting the market into two kinds of actors: validators are responsible for attesting and proposing blocks, while the task of choosing a block's contents is outsourced to builders through an auction protocol. Individual stakers no longer need to worry about optimizing DeFi arbitrage themselves; they simply join the auction protocol and accept the highest bid. This is called proposer/builder separation (PBS). The approach has precedents in other industries: a major reason restaurants can remain so decentralized is that they often rely on a fairly concentrated set of suppliers for various operations that do have large economies of scale. So far, PBS has been reasonably successful at keeping small and large validators on a level playing field, at least as far as MEV is concerned. However, it creates another problem: the task of choosing which transactions get included becomes more concentrated.
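
From the proposer's side, the auction can be sketched in a few lines. The `Bid` type, its fields and the builder names below are hypothetical and only illustrate the "accept the highest bid" rule; they do not reflect any real relay or client API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bid:
    builder: str
    payment_eth: float  # what the builder offers to pay the proposer
    block_hash: str     # commitment to the contents the builder chose


def choose_bid(bids):
    """The proposer's whole strategy: accept the highest-paying bid."""
    if not bids:
        return None
    return max(bids, key=lambda b: b.payment_eth)


bids = [
    Bid("builder_a", 0.08, "0xaaa"),
    Bid("builder_b", 0.11, "0xbbb"),
    Bid("builder_c", 0.05, "0xccc"),
]

# A solo staker and a large operator see the same bids and run the same
# rule, so they earn the same MEV revenue for the slot.
solo_choice = choose_bid(bids)
large_operator_choice = choose_bid(bids)
print(solo_choice.builder, solo_choice.payment_eth)
```

The proposer never inspects the block's contents here, which is exactly the concentration problem described above: the builder alone decides what is included.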

My view on this has always been that MEV minimization is good and we should pursue it (I personally use Cowswap!), but it is unlikely to be sufficient on its own: MEV will not drop to zero, or even close to zero. Therefore, we also need some form of MEV quarantining. This creates an interesting task: how do we make the "MEV quarantine box" as small as possible? How do we give builders as little power as possible, while still letting them absorb the role of optimizing arbitrage and other forms of MEV collection?

If builders have the power to exclude transactions from a block entirely, attacks become easy. Suppose you have a collateralized debt position (CDP) in a DeFi protocol, backed by an asset whose price is dropping rapidly. You want to either add collateral or exit the CDP. Malicious builders could collude to refuse to include your transaction, delaying it until the price falls far enough that your CDP can be forcibly liquidated. If that happens, you would have to pay a large penalty, and the builders would receive a large share of it. So how do we prevent builders from excluding transactions to carry out this kind of attack?

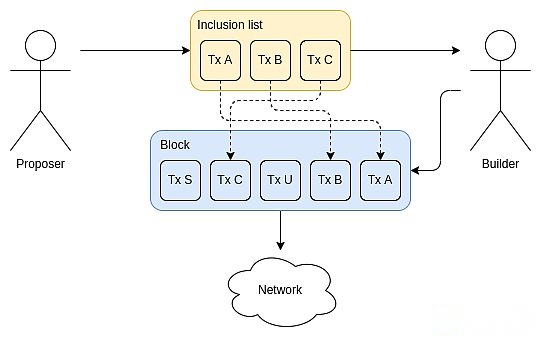

This is where inclusion lists come in.

Source: ethresear.ch

Inclusion lists allow block proposers (that is, stakers) to choose transactions that are required to go into the block. Builders can still reorder transactions or insert their own, but they must include the proposer's transactions. Inclusion lists were eventually modified to constrain the next block rather than the current block. In either case, they take away the builder's ability to push transactions out of the block entirely.
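
The core constraint can be sketched as a simple check. This is an illustration only: transactions are represented as plain strings, `satisfies_inclusion_list` is a hypothetical helper, and the sketch ignores any exceptions a real specification may define (for example, for transactions that have become invalid or a block that is already full).

```python
def satisfies_inclusion_list(block_txs, inclusion_list):
    """True if every transaction the proposer required appears in the block.

    Order does not matter and extra transactions are allowed, so the builder
    keeps the freedom to reorder and to insert its own transactions.
    """
    included = set(block_txs)
    return all(tx in included for tx in inclusion_list)


inclusion_list = ["tx_user_topup", "tx_user_swap"]

# The builder reorders and adds its own arbitrage transaction: acceptable.
honest_block = ["tx_builder_arb", "tx_user_swap", "tx_other", "tx_user_topup"]

# The builder drops the user's collateral top-up: rejected.
censoring_block = ["tx_builder_arb", "tx_user_swap", "tx_other"]

print(satisfies_inclusion_list(honest_block, inclusion_list))     # True
print(satisfies_inclusion_list(censoring_block, inclusion_list))  # False
```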

MEV is a complex issue; even the description above misses many important nuances. As the saying goes, "you may not be looking for MEV, but MEV is looking for you". Ethereum researchers are already quite aligned on the goal of "minimizing the quarantine box": reducing as much as possible the harm that builders can do (for example, by excluding or delaying transactions as a way of attacking specific applications).

That said, I do think we can go further. Historically, inclusion lists have often been treated as a special-case feature: normally you would not think about them, but if malicious builders start doing crazy things, they give you a "second path". This attitude is reflected in current design decisions: in the current EIP, the gas limit of an inclusion list is around 2.1 million. But we can shift how we think about inclusion lists: treat the inclusion list as the block, and treat the builder's role as an auxiliary function that adds a few transactions to collect MEV.

I find ideas in this direction, really pushing the quarantine box to be as small as possible, very interesting, and I am in favor of going that way. This is a shift from "2021-era philosophy", in which we were more enthusiastic about the idea that, since we now have builders, we can "overload" their functionality and have them serve users in more complicated ways, for example by supporting ERC-4337 fee markets. Under the new philosophy, the transaction validation parts of ERC-4337 would have to be enshrined in the protocol. Fortunately, the ERC-4337 team is already increasingly enthusiastic about this direction.

Summary: MEV thinking has been moving back toward empowering block producers, including giving block producers the authority to directly ensure that users' transactions are included. Account abstraction proposals are moving back toward removing reliance on centralized relayers, and even bundlers. However, there is a good argument that we are not going far enough, and I think pressure that pushes the development process further in this direction is very welcome.

Liquid staking

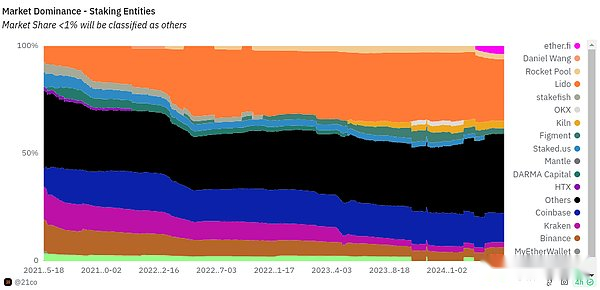

Today, solo stakers make up a relatively small share of all Ethereum staking. Most staking is done by various providers: some are centralized operators, and others are DAOs such as Lido and RocketPool.

I have done my own research (various polls, surveys and in-person conversations), asking the question "why are you, specifically you, not solo staking today?" To me, a robust solo staking ecosystem is by far my preferred outcome for Ethereum staking, and one of the best things about Ethereum is that we actually try to support a robust solo staking ecosystem rather than simply surrendering to delegation. However, we are still far from that outcome. In my polls and surveys, a few trends are consistent:

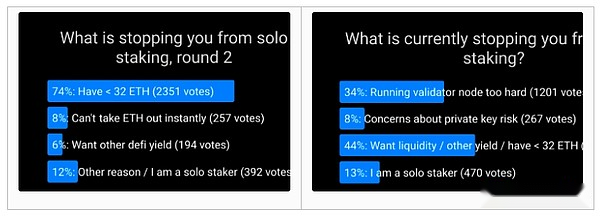

The great majority of people who are not solo staking cite the 32 ETH minimum as their main reason.

Among those who cite other reasons, the most common is the technical challenge of running and maintaining a validator node.

The loss of instant availability of ETH, the security risk of "hot" private keys, and the loss of the ability to participate in DeFi protocols at the same time are significant but smaller concerns.

The main reasons people are not solo staking, according to Farcaster polls.

There are two key questions for staking research to resolve:

How do we solve these concerns?

If, despite effective solutions to most of these concerns, most people still do not want to solo stake, how do we keep the protocol stable and robust against attacks?

Many ongoing research and development efforts are aimed at solving exactly these problems:

Verkle trees plus EIP-4444 allow staking nodes to run with very low hard disk requirements. They also allow staking nodes to sync almost instantly, which greatly simplifies the setup process as well as operations such as switching from one client implementation to another. In addition, they make Ethereum light clients more viable by reducing the data bandwidth needed to provide proofs for each state access.

Research (several proposals exist) into ways to allow a much larger validator set, enabling a much smaller staking minimum, while at the same time reducing consensus node overhead. These ideas can be implemented as part of single-slot finality. This would also make light clients safer, because they would be able to verify the full set of signatures instead of relying on sync committees.

Ongoing Ethereum client optimizations keep reducing the cost and difficulty of running a validator node, despite the growing history.

Research on capping penalties could mitigate concerns about private key risk, and make it possible for stakers to simultaneously stake their ETH in DeFi protocols if they wish.

0x01 withdrawal credentials allow stakers to set an ETH address as their withdrawal address. This makes decentralized staking pools more viable, giving them an advantage over centralized staking pools.

However, there is still more we could do. It is theoretically possible to let validators withdraw much faster: Casper FFG remains safe even if the validator set changes by a few percent each time it finalizes (that is, once per epoch). So, with some effort, we could shorten the withdrawal period considerably. If we wanted to greatly reduce the minimum deposit size, we could make a hard decision to trade off in other directions. For example, if we increase the time to finality by 4x, that would allow a 4x decrease in the minimum deposit size. Single-slot finality would later clean this up by moving beyond the "every staker participates in every epoch" model entirely.
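
The 4x trade-off follows from simple arithmetic if we assume that the binding constraint is the number of signatures the network must process per slot. The model below is deliberately simplified and its numbers are hypothetical: it assumes a fixed total stake, every validator depositing exactly the minimum, and every validator signing once per finality period.

```python
def signatures_per_slot(total_stake_eth, min_deposit_eth, slots_per_finality_period):
    """Per-slot signature load when every validator signs once per period.

    Worst case: all stake is split into validators of exactly the minimum size.
    """
    validators = total_stake_eth / min_deposit_eth
    return validators / slots_per_finality_period


TOTAL_STAKE = 32_000_000  # hypothetical round number, in ETH

# Baseline: 32 ETH minimum, validators spread over a 32-slot period.
baseline = signatures_per_slot(TOTAL_STAKE, 32, 32)

# 4x longer finality period (128 slots) with a 4x smaller minimum (8 ETH).
traded = signatures_per_slot(TOTAL_STAKE, 8, 128)

print(baseline, traded)  # same per-slot load in both cases
```

Under these assumptions, four times as many validators spread over four times as many slots leaves the per-slot load unchanged, which is the sense in which finality time and minimum deposit size can be traded against each other.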

Another important part of this whole question is the economics of staking. One key question is: do we want staking to be a relatively niche activity, or do we want everyone, or almost everyone, to stake their ETH? If everyone is staking, what responsibility do we want everyone to take on? If people end up simply delegating that responsibility out of laziness, the result could be centralization. There are important and deep philosophical questions here. Wrong answers could lead Ethereum down a path of centralization and "re-creating the traditional financial system with extra steps"; right answers could create a shining example of a successful ecosystem with a wide and diverse set of solo stakers and highly decentralized staking pools. These questions touch on Ethereum's core economics and values, so we need more diverse participation here.

Node hardware requirements

Many of the key questions in Ethereum decentralization ultimately come down to a question that has defined blockchains for a decade: how accessible do we want running a node to be, and how do we achieve that?

Today, running a node is hard. Most people do not do it. On the laptop I am using to write this post, I have a reth node that takes up 2.1 TB, and that is already the result of heroic software engineering and optimization. I needed to buy an extra 4 TB hard drive and put it in my laptop to store the node. We all want running a node to be easier. In my ideal world, people would be able to run nodes on their phones.

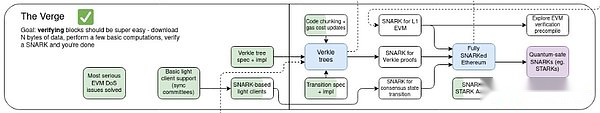

As I wrote above, EIP-4444 and Verkle trees are two key technologies that bring us closer to this ideal. If both are implemented, the hardware requirements of a node could plausibly drop to less than a hundred gigabytes, and perhaps to near zero if we eliminate the history storage responsibility entirely (perhaps only for non-staking nodes). Type 1 ZK-EVMs would remove the need to run EVM computation yourself, because you could simply verify a proof that the execution was correct. In my ideal world, we stack all of these technologies together, and even Ethereum browser extension wallets (such as Metamask and Rabby) have a built-in node that verifies these proofs, performs data availability sampling, and is satisfied that the chain is correct.

The above vision is usually called “The Verge”.

All of this is well known and understood, even by those who raise concerns about Ethereum node size. However, there is an important concern: if we offload the responsibility for maintaining state and providing proofs to other actors, is that not a centralization vector? Even if they cannot cheat by providing invalid data, does depending on them too heavily not still go against the principles of Ethereum?

A very near-term version of this concern is the discomfort many people feel about EIP-4444: if regular Ethereum nodes no longer need to store old history, who does? A common answer is that there are certainly enough large actors (such as block explorers, exchanges and layer 2s) with an incentive to hold this data, and that compared with the 100 petabytes stored by the Wayback Machine, the Ethereum chain is tiny. So it is ridiculous to think that any history will actually be lost.

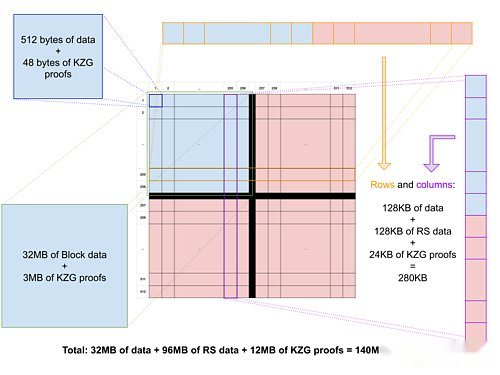

However, this argument depends on a small number of large actors. In my taxonomy of trust models, this is a 1-of-N assumption, but N is quite small. This has its tail risks. One thing we could do instead is store old history in a peer-to-peer network, where each node stores only a small fraction of the data. Such a network would still replicate data enough to ensure robustness: there would be thousands of copies of each piece of data, and in the future we could use erasure coding (realistically, by putting history into EIP-4844-style blobs, which already have erasure coding built in) to improve robustness further.

Blobs have erasure coding both within each blob and between blobs. The easiest way to provide ultra-robust storage for all of Ethereum's history may well be to put beacon and execution blocks into blobs. Image source: codex.storage
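
A back-of-the-envelope model shows why storing only a small fraction per node can still be robust. The sketch assumes each node independently stores a uniformly random fraction of the pieces, with no erasure coding, and the node counts are hypothetical; a real network would assign data more deliberately.

```python
def expected_copies(num_nodes, fraction_stored):
    """Average number of nodes holding any given piece of history."""
    return num_nodes * fraction_stored


def prob_piece_unavailable(num_nodes, fraction_stored, offline_fraction=0.0):
    """Probability that no online node holds a given piece.

    Assumes nodes choose what to store, and go offline, independently.
    """
    p_holds_and_online = fraction_stored * (1.0 - offline_fraction)
    return (1.0 - p_holds_and_online) ** num_nodes


# Hypothetical network: 100,000 nodes, each storing 2% of history.
copies = expected_copies(100_000, 0.02)
loss_when_90pct_offline = prob_piece_unavailable(100_000, 0.02, offline_fraction=0.9)

# Contrast: relying on 10 holders that each keep the same 2%.
loss_with_ten_nodes = prob_piece_unavailable(10, 0.02)

print(f"expected copies per piece: {copies:.0f}")
print(f"loss probability with 90% of nodes offline: {loss_when_90pct_offline:.3e}")
print(f"loss probability with only 10 nodes: {loss_with_ten_nodes:.3f}")
```

With many nodes, each piece ends up with thousands of copies and stays available even if most of the network disappears; with few holders, the same per-node fraction gives almost no protection.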

For a long time, this work has been on the back burner. The Portal Network does exist, but realistically it has not received attention commensurate with its importance to Ethereum's future. Fortunately, there is now strong interest and momentum toward putting far more resources into a minimized version of Portal that focuses on distributed storage of, and access to, history. We should make a concerted effort to implement EIP-4444 soon, paired with a robust decentralized peer-to-peer network for storing and retrieving old history.

For state and ZK-EVMs, this kind of distributed approach is harder. To build an efficient block, you simply need to have the full state. Here, I personally favor a pragmatic approach: we define, and stick to, some level of hardware requirements for a "node that does everything", which is higher than the (ideally ever-decreasing) cost of simply validating the chain, but still low enough to be affordable for hobbyists. We rely on a 1-of-N assumption, where we ensure that N is quite large.

ZK-EVM proving is likely to be the trickiest part, and real-time ZK-EVM provers will probably need considerably more powerful hardware than archive nodes, even with advances like Binius and worst-case bounding with multidimensional gas. We could work hard on a distributed proving network, in which each node takes responsibility for proving, say, one percent of a block's execution, and the block producer then only needs to aggregate the hundred proofs at the end. Proof aggregation trees could help further. But if this does not work well, another compromise would be to let the hardware requirements of proving rise, while making sure that a "node that does everything" can verify Ethereum blocks directly (without a proof), fast enough to participate effectively in the network.
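
To see how an aggregation tree changes the workload, the sketch below counts only the shape of the tree, not any cryptography. The `aggregation_levels` helper and the fan-in values are assumptions for illustration; actual costs depend on the proof system used.

```python
def aggregation_levels(num_proofs, fan_in):
    """Rounds needed to reduce num_proofs to a single proof when each
    aggregator combines at most fan_in proofs per round."""
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2")
    levels = 0
    while num_proofs > 1:
        num_proofs = -(-num_proofs // fan_in)  # ceiling division
        levels += 1
    return levels


# Flat aggregation: the block producer combines all 100 proofs itself.
flat = aggregation_levels(100, fan_in=100)

# Trees: no single node ever handles more than fan_in proofs,
# at the price of extra sequential rounds.
tree_of_10 = aggregation_levels(100, fan_in=10)
binary_tree = aggregation_levels(100, fan_in=2)

print(flat, tree_of_10, binary_tree)  # 1 2 7
```

The trade-off is between the work any one node must do (bounded by the fan-in) and the number of sequential rounds, which matters when proofs have to be ready in real time.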

Conclusions

I think it is actually true that 2021-era Ethereum thinking became too comfortable with offloading responsibilities to a small number of large actors, as long as some market mechanism or zero-knowledge proof system existed to force those centralized actors to behave honestly. Such systems often work well in the average case, but fail catastrophically in the worst case.

At the same time, I think it is important to emphasize that current Ethereum protocol proposals have already moved significantly away from that model, and take the need for a truly decentralized network much more seriously. Ideas around stateless nodes, MEV mitigations, single-slot finality and similar concepts already go much further in this direction. A year ago, the idea of doing data availability sampling by relying on relays as semi-centralized nodes was seriously considered. This year, we have moved beyond the need to do such things, with surprisingly robust progress on PeerDAS.

But there is a lot we could do to go further in this direction, on all three of the central issues I discussed above as well as on many other important issues. Helios has made great progress in giving Ethereum an "actual light client". Now, we need to get it included by default in Ethereum wallets, have RPC providers supply proofs along with their results so that they can be verified, and extend light client technology to layer 2 protocols. If Ethereum is scaling via a rollup-centric roadmap, layer 2s need to get the same security and decentralization guarantees as layer 1. In a rollup-centric world, there are many other things we should take more seriously; decentralized and efficient cross-L2 bridges are one example of many. Many dapps get their logs through centralized protocols, because Ethereum's log scanning has become too slow. We could improve on this with a dedicated decentralized sub-protocol, and a proposal for how to do this already exists.

There is a nearly unlimited number of blockchain projects aiming for the niche of "we can be super fast, we will think about decentralization later". I do not think Ethereum should be one of them. Ethereum L1 can and certainly should be a strong base layer for layer 2 projects that take a hyper-scale approach, using Ethereum as a backbone for decentralization and security. Even a layer-2-centric approach requires layer 1 itself to have enough scalability to handle a significant number of operations. But we should deeply respect the properties that make Ethereum unique, and keep working to maintain and improve them as Ethereum scales.